Development Guides

API: Interfaces with the sas

You can interact with the sas (the antivirus gateway) through its API, either interactively (CLI) or programmatically (SDK). The only requirement is that the CLI, or the SDK, implements the AWS S3 interfaces.

Step 1: File upload - applying labels (BeC)

Files must be uploaded to the sas bucket.

To benefit from the BeC features, simply include the desired S3 labels when ingesting the file into the sas.

For example, ingesting a file "text.csv" through the CLI with the labels ORGA = ATHEA and CLASSIFICATION = NP is done as follows:

# ingestion

mc cp --attr 'ORGA=ATHEA;CLASSIFICATION=NP' text.csv api/ingestion/text.csv

# check that the labels exist

mc stat api/ingestion/text.csv

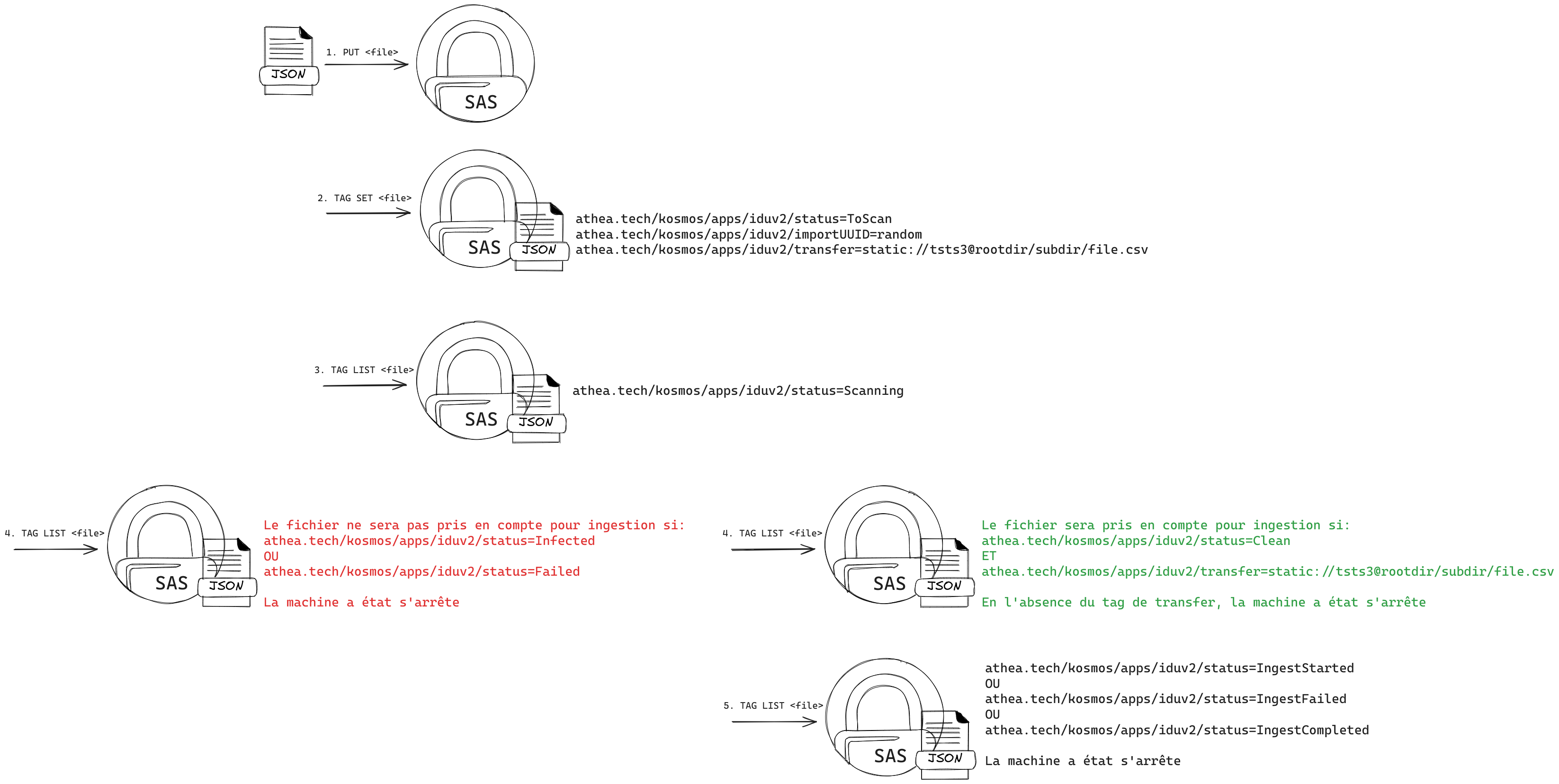
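When the labels come from a script rather than from the command line, the label string can be assembled programmatically. The sketch below only illustrates the `KEY=VALUE;KEY=VALUE` form used in the command above; the helper name `format_attr` is hypothetical, and rejecting `;` and `=` inside keys is a cautious assumption, not a documented rule of the sas.

```python
def format_attr(labels: dict[str, str]) -> str:
    """Join labels as 'KEY=VALUE;KEY=VALUE', the form used in the mc example."""
    parts = []
    for key, value in labels.items():
        # ';' and '=' act as separators, so refuse them where they would be ambiguous.
        if not key or ";" in key or "=" in key or ";" in value:
            raise ValueError(f"unsupported label: {key!r}={value!r}")
        parts.append(f"{key}={value}")
    return ";".join(parts)


print(format_attr({"ORGA": "ATHEA", "CLASSIFICATION": "NP"}))
# ORGA=ATHEA;CLASSIFICATION=NP
```

The resulting string can be passed as the value of the label option of `mc cp`.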

Step 2: Sas / Ingestion

If you connect to the sas via STS using a JWT token, the S3 PBAC rules apply and the only tag you can set is athea.tech/kosmos/apps/iduv2/status with the value ToScan.
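For readers who want to see what the STS exchange involves, here is a minimal sketch of the two pure steps: building the form body and extracting the temporary credentials from the XML answer. The form fields mirror the ones used by the AuthSts function of the TypeScript example in this guide; the helper names are hypothetical, the HTTP POST itself is left out, and the XML layout is assumed to follow the AssumeRoleWithWebIdentity response format.

```python
import xml.etree.ElementTree as ET
from urllib.parse import urlencode

WANTED = ("AccessKeyId", "SecretAccessKey", "SessionToken")


def build_sts_body(jwt: str, duration_seconds: int = 3600) -> str:
    """Form-encoded body to POST to the S3 endpoint."""
    return urlencode({
        "Action": "AssumeRoleWithWebIdentity",
        "Version": "2011-06-15",
        "DurationSeconds": str(duration_seconds),
        "WebIdentityToken": jwt,
    })


def parse_sts_credentials(xml_text: str) -> dict[str, str]:
    """Extract the temporary credentials, ignoring any XML namespace."""
    found: dict[str, str] = {}
    for element in ET.fromstring(xml_text).iter():
        name = element.tag.rsplit("}", 1)[-1]  # strip '{namespace}' if present
        if name in WANTED:
            found[name] = (element.text or "").strip()
    missing = [name for name in WANTED if name not in found]
    if missing:
        raise ValueError(f"missing fields in STS response: {missing}")
    return found
```

The three returned values can then be used as access key, secret key and session token of any S3 client.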

Once the file has been uploaded to the sas, you can either:

- run only a scan on the file (tag status=ToScan)

athea.tech/kosmos/apps/iduv2/status=ToScan

athea.tech/kosmos/apps/iduv2/importUUID=specialuuid

# check that no action has been performed on the file yet

mc tag list api/ingestion/text.csv

# start a scan

export SCAN_TAG="athea.tech/kosmos/apps/iduv2/status=ToScan"

export SCAN_ID="athea.tech/kosmos/apps/iduv2/importUUID=specialuuid"

# specialuuid = a unique identifier

mc tag set api/ingestion/text.csv "${SCAN_TAG}&${SCAN_ID}"

- or run a scan and then, if the file is clean, ingest it into an EdS by adding one more tag (tag transfer)

athea.tech/kosmos/apps/iduv2/status=ToScan

athea.tech/kosmos/apps/iduv2/importUUID=specialuuid

athea.tech/kosmos/apps/iduv2/transfer=static://tsts3@rootdir/subdir/file.csv

# check that no action has been performed on the file yet

mc tag list api/ingestion/text.csv

# start a scan

export SCAN_TAG='athea.tech/kosmos/apps/iduv2/status=ToScan'

export SCAN_ID='athea.tech/kosmos/apps/iduv2/importUUID=specialuuid'

export SCAN_DST='athea.tech/kosmos/apps/iduv2/transfer=static://tsts3@rootdir/subdir/file.csv'

# static://tsts3@rootdir/subdir/file.csv

# tsts3 = name of an EdS

# specialuuid = a unique identifier

# rootdir/subdir/file.csv = final name of the file in the destination EdS

mc tag set api/ingestion/text.csv "${SCAN_TAG}&${SCAN_ID}&${SCAN_DST}"
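The two variants above differ only by the optional transfer tag, so the tag set can be built by one small helper. This is a sketch under the assumption that the three tag keys shown above are the only ones needed; the function names are hypothetical. It produces both the `key=value&key=value` string expected by `mc tag set` and the `TagSet` list expected by S3 SDKs.

```python
from typing import Optional

TAG_PREFIX = "athea.tech/kosmos/apps/iduv2/"


def build_transfer_uri(eds: str, path: str) -> str:
    """static://<EdS name>@<final path of the file in the destination EdS>"""
    return f"static://{eds}@{path}"


def build_tags(import_uuid: str, transfer: Optional[str] = None) -> dict[str, str]:
    """Tags for a scan only, or for a scan followed by a transfer."""
    tags = {
        TAG_PREFIX + "status": "ToScan",
        TAG_PREFIX + "importUUID": import_uuid,
    }
    if transfer is not None:
        tags[TAG_PREFIX + "transfer"] = transfer
    return tags


def to_mc_argument(tags: dict[str, str]) -> str:
    """String form used on the mc command line."""
    return "&".join(f"{key}={value}" for key, value in tags.items())


def to_s3_tagset(tags: dict[str, str]) -> list[dict[str, str]]:
    """List form used by the S3 PutObjectTagging call."""
    return [{"Key": key, "Value": value} for key, value in tags.items()]


uri = build_transfer_uri("tsts3", "rootdir/subdir/file.csv")
print(to_mc_argument(build_tags("specialuuid", uri)))
```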

Step 3: Monitoring the progress of the scan and of the ingestion into the EdS

watch mc tag list api/ingestion/text.csv

The possible values of the athea.tech/kosmos/apps/iduv2/status tag are listed below, grouped into two main stages:

Stage 1: Scan tracking

- ToScan: triggers the scan

- Scanning: the file is being scanned

- Failed: the scan did not complete

- Clean: the scan has finished and the file is clean

- Infected: the scan has finished and the file is infected

Stage 2: Tracking the ingestion into the EdS, if the file is clean

- IngestStarted: the ingestion pipeline has started ingesting the file tagged as clean

- IngestCompleted: the ingestion pipeline has finished ingesting the file into the EdS

- IngestFailed: the ingestion pipeline failed to ingest the file into the EdS
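Client code usually needs to answer two questions from a status value: is the scan over, and was the file clean? The sketch below encodes the two lists above; the function names are hypothetical, and treating any ingestion status as proof of a clean scan is an inference from the fact that ingestion only starts for clean files.

```python
SCAN_STATUSES = {"ToScan", "Scanning", "Failed", "Clean", "Infected"}
INGEST_STATUSES = {"IngestStarted", "IngestCompleted", "IngestFailed"}


def stage_of(status: str) -> str:
    """Return 'scan' or 'ingest' depending on the list the status belongs to."""
    if status in SCAN_STATUSES:
        return "scan"
    if status in INGEST_STATUSES:
        return "ingest"
    raise ValueError(f"unknown status: {status!r}")


def scan_finished(status: str) -> bool:
    """True once the scan has produced a result or has failed."""
    return stage_of(status) == "ingest" or status in {"Failed", "Clean", "Infected"}


def passed_scan(status: str) -> bool:
    """True when the file was found clean (any ingest status implies it)."""
    return status == "Clean" or stage_of(status) == "ingest"


for value in ("Scanning", "Infected", "IngestFailed"):
    print(value, stage_of(value), scan_finished(value), passed_scan(value))
```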

Step 4: Data retention and cleanup

By default, ingested files are retained for 7 days before being deleted automatically. This value is configurable when the sas is deployed.
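To estimate when a given file is likely to disappear, the retention period can be added to its upload time. This is only a sketch: it assumes the retention is counted from the upload time, which the guide does not state, and the actual cleanup schedule of the sas may differ.

```python
from datetime import datetime, timedelta, timezone

DEFAULT_RETENTION_DAYS = 7  # default value; configurable when the sas is deployed


def expected_deletion(uploaded_at: datetime, retention_days: int = DEFAULT_RETENTION_DAYS) -> datetime:
    # Assumption: the retention period starts at the upload time.
    return uploaded_at + timedelta(days=retention_days)


def is_expired(uploaded_at: datetime, now: datetime, retention_days: int = DEFAULT_RETENTION_DAYS) -> bool:
    return now >= expected_deletion(uploaded_at, retention_days)


uploaded = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
print(expected_deletion(uploaded).isoformat())  # 2024-01-08T12:00:00+00:00
```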

If you need to trigger deletions because of a disk-space problem, you can log in to the internal sas UI at https://scan-admin.DOMAIN (see the administration procedures).

GOLANG - Example

AWS SDKV2

package main

import (

"context"

"fmt"

"net/url"

"os"

"path/filepath"

"time"

"github.com/aws/aws-sdk-go-v2/aws"

"github.com/aws/aws-sdk-go-v2/config"

"github.com/aws/aws-sdk-go-v2/credentials"

"github.com/aws/aws-sdk-go-v2/service/s3"

"github.com/aws/aws-sdk-go-v2/service/s3/types"

transport "github.com/aws/smithy-go/endpoints"

"github.com/google/uuid"

)

var (

user string = "minioadmin"

password string = "minioadmin"

endpoint string = "http://host.docker.internal:9000"

bucket string = "sas"

key string = "/bin/sh"

)

func main() {

var err error

var s3cfg aws.Config

if s3cfg, err = config.LoadDefaultConfig(context.Background(),

config.WithCredentialsProvider(

credentials.NewStaticCredentialsProvider(

user,

password,

"",

),

),

config.WithRegion("auto"),

//config.WithClientLogMode(aws.LogRequest|aws.LogRequestWithBody|aws.LogRequestEventMessage),

); err != nil {

panic(err)

}

// immutable hostname

var addr *url.URL

if addr, err = url.Parse(endpoint); err != nil {

panic(err)

}

client := s3.NewFromConfig(s3cfg,

s3.WithEndpointResolverV2(&staticResolver{URL: addr}),

func(o *s3.Options) {

o.UsePathStyle = true

o.BaseEndpoint = aws.String(endpoint)

},

)

var file *os.File

if file, err = os.Open(key); err != nil {

panic(err)

}

defer file.Close()

if _, err = client.PutObject(context.Background(), &s3.PutObjectInput{

Bucket: aws.String(bucket),

Key: aws.String(key),

Body: file,

Metadata: map[string]string{

"attr1": "val1",

},

}); err != nil {

panic(err)

}

if _, err = client.PutObjectTagging(context.Background(), &s3.PutObjectTaggingInput{

Bucket: aws.String(bucket),

Key: aws.String(key),

Tagging: &types.Tagging{

TagSet: []types.Tag{

{

Key: aws.String("athea.tech/kosmos/apps/iduv2/status"),

Value: aws.String("ToScan"),

},

{

Key: aws.String("athea.tech/kosmos/apps/iduv2/importUUID"),

Value: aws.String(uuid.NewString()),

},

},

},

}); err != nil {

panic(err)

}

ticker := time.NewTicker(time.Second * 1)

for range ticker.C {

var tout *s3.GetObjectTaggingOutput

if tout, err = client.GetObjectTagging(context.Background(), &s3.GetObjectTaggingInput{

Bucket: aws.String(bucket),

Key: aws.String(key),

}); err != nil {

panic(err)

}

for _, tag := range tout.TagSet {

tag := tag

key := *tag.Key

value := *tag.Value

if key == "athea.tech/kosmos/apps/iduv2/status" {

switch value {

case "Clean", "IngestStarted", "IngestFailed", "IngestCompleted":

fmt.Println("No virus detected")

return

case "Infected":

fmt.Println("Virus detected")

return

case "Failed":

fmt.Println("Failed")

return

default:

fmt.Println("Scanning...")

}

}

}

}

}

type staticResolver struct {

URL *url.URL

}

func (r *staticResolver) ResolveEndpoint(

_ context.Context,

params s3.EndpointParameters,

) (transport.Endpoint, error) {

u := *r.URL

u.Path = filepath.Join(u.Path, *params.Bucket)

return transport.Endpoint{URI: u}, nil

}

TypeScript - Example

The following script, s3UploadDemoFn.ts, exposes these functions:

- PutObjectAndTag: uploads a small file and tags it so that it is scanned and then ingested (copied to a destination EdS).

- MultipartUploadAndTag: uploads a larger file in multipart mode and tags it so that it is scanned and then ingested (copied to a destination EdS).

- AuthSts: authenticates with STS using the user's JWT token.

It was tested with the following dependency versions:

{

"dependencies": {

"@aws-sdk/client-s3": "^3.635.0",

"@aws-sdk/types": "^3.609.0",

"@types/crypto-js": "^4.2.2",

"@xstate/react": "^5.0.0",

"crypto-js": "^4.2.0",

"fast-xml-parser": "^4.5.0",

"nanoid": "^5.0.8"

}

}

import {

CompleteMultipartUploadCommand,

CompletedMultipartUpload,

CreateMultipartUploadCommand,

CreateMultipartUploadRequest,

PutObjectCommand,

PutObjectTaggingCommand,

S3Client,

S3ClientConfig,

UploadPartCommand,

UploadPartCommandInput,

UploadPartCommandOutput,

} from '@aws-sdk/client-s3';

import { AwsCredentialIdentity } from '@aws-sdk/types';

import { algo, enc, lib } from 'crypto-js';

import { XMLParser } from 'fast-xml-parser';

export type MultipartUploadFileHandler = {

bytesSent: number;

uploadPartResults: Record<number, UploadPartCommandOutput>;

uploadPartChecksum: Record<number, lib.WordArray>;

};

// Upload a small file using PutObjectCommand and tag it

export const PutObjectAndTag = async (

s3Endpoint: string,

importId: string,

file: File,

s3Credentials: AwsCredentialIdentity,

sasBucketName: string,

destBucketName: string,

destObjectKey: string,

metadata: Record<string, string> | undefined,

) => {

const s3Config: S3ClientConfig = {

// not used by minio

region: 'us-east-1',

credentials: s3Credentials,

endpoint: s3Endpoint,

forcePathStyle: true,

};

const s3client = new S3Client(s3Config);

const arrayBuffer = await file.arrayBuffer();

const command = new PutObjectCommand({

Bucket: sasBucketName,

Key: destObjectKey,

Body: new Uint8Array(arrayBuffer),

ContentType: file.type,

Metadata: metadata,

});

// put object

const response = await s3client.send(command);

// and put tags

await putObjectTagging(s3client, importId, sasBucketName, destBucketName, destObjectKey);

return response;

};

// Put tags in order to trigger scan and ingestion

const putObjectTagging = async (

s3client: S3Client,

importId: string,

sasBucketName: string,

destBucketName: string,

destObjectKey: string,

) => {

const tags = [

{

Key: 'athea.tech/kosmos/apps/iduv2/status',

Value: 'ToScan',

},

{

Key: 'athea.tech/kosmos/apps/iduv2/cls',

Value: 'TOP_SECRET',

},

{

Key: 'athea.tech/kosmos/apps/iduv2/importUUID',

Value: importId,

},

{

Key: 'athea.tech/kosmos/apps/iduv2/transfer',

Value: `static://${destBucketName}@/`,

},

];

const tagCommand = new PutObjectTaggingCommand({

Bucket: sasBucketName,

Key: destObjectKey,

Tagging: {

TagSet: tags,

},

});

return s3client.send(tagCommand);

};

const parser = new XMLParser();

// Authenticate using STS

export const AuthSts = async (endpoint: string, jwt: string): Promise<AwsCredentialIdentity | undefined> => {

const data = new URLSearchParams();

data.append('Action', 'AssumeRoleWithWebIdentity');

data.append('Version', '2011-06-15');

data.append('DurationSeconds', '3600');

data.append('claim_name', 'policy');

data.append('WebIdentityToken', jwt);

const response = await fetch(endpoint, {

method: 'POST',

headers: {

'Content-Type': 'application/x-www-form-urlencoded',

},

body: data.toString(),

});

if (response.ok) {

const responseText = await response.text();

const xmlAsJson = parser.parse(responseText);

if (xmlAsJson) {

return {

accessKeyId:

xmlAsJson.AssumeRoleWithWebIdentityResponse.AssumeRoleWithWebIdentityResult.Credentials.AccessKeyId,

secretAccessKey:

xmlAsJson.AssumeRoleWithWebIdentityResponse.AssumeRoleWithWebIdentityResult.Credentials.SecretAccessKey,

sessionToken:

xmlAsJson.AssumeRoleWithWebIdentityResponse.AssumeRoleWithWebIdentityResult.Credentials.SessionToken,

};

}

} else {

const responseText = await response.text();

const xmlAsJson = parser.parse(responseText);

if (xmlAsJson) {

throw new Error(xmlAsJson.ErrorResponse.Error.Message);

}

}

};

// Upload large files using multipart upload

export const MultipartUploadAndTag = async (

s3Endpoint: string,

importId: string,

file: File,

s3Credentials: AwsCredentialIdentity,

sasBucketName: string,

destBucketName: string,

destObjectKey: string,

metadata: Record<string, string> | undefined,

maxChunkSize: number,

) => {

const s3Config: S3ClientConfig = {

region: 'us-east-1',

credentials: s3Credentials,

endpoint: s3Endpoint,

forcePathStyle: true,

};

const s3client = new S3Client(s3Config);

const ufh: MultipartUploadFileHandler = {

uploadPartResults: {},

uploadPartChecksum: {},

bytesSent: 0,

};

const params: CreateMultipartUploadRequest = {

Bucket: sasBucketName,

Key: destObjectKey,

ACL: 'public-read',

ContentType: file.type,

StorageClass: 'STANDARD',

Metadata: metadata,

// ChecksumAlgorithm: 'SHA256',

};

const command = new CreateMultipartUploadCommand(params);

const data = await s3client.send(command);

if (data.UploadId) {

return await uploadFileByChunks(

file,

importId,

sasBucketName,

destBucketName,

maxChunkSize,

ufh,

destObjectKey,

data.UploadId,

s3client,

);

} else {

throw new Error('No uploadId returned');

}

};

// Upload a file by reading its bytes and splitting them into parts

const uploadFileByChunks = async (

file: File,

importId: string,

Bucket: string,

destBucketName: string,

maxChunkSize: number,

ufh: MultipartUploadFileHandler,

Key: string,

UploadId: string,

client: S3Client,

) => {

const fileStream = file.stream();

const streamReader = fileStream.getReader();

let partNumber = 1;

let finished = false;

const buffer: Uint8Array = new Uint8Array(maxChunkSize);

let currentBufferOffset = 0;

while (!finished) {

const { done, value: data } = await streamReader.read();

if (done) {

finished = true;

}

if (data) {

const remainingBufferSpace = maxChunkSize - currentBufferOffset;

if (remainingBufferSpace >= data.byteLength) {

// enough place in buffer, let's fill it

buffer.set(data, currentBufferOffset);

currentBufferOffset += data.byteLength;

} else {

// not enough room in the buffer: fill it up, then upload the full buffer

buffer.set(data.slice(0, remainingBufferSpace), currentBufferOffset);

await uploadChunk(Bucket, buffer.slice(0, maxChunkSize), partNumber, client, Key, UploadId, ufh);

partNumber++;

// put remaining data in buffer

currentBufferOffset = 0;

const remainingData = data.slice(remainingBufferSpace);

buffer.set(remainingData, currentBufferOffset);

currentBufferOffset += remainingData.byteLength;

}

}

if (finished && currentBufferOffset > 0) {

// send the last chunk

await uploadChunk(Bucket, buffer.slice(0, currentBufferOffset), partNumber, client, Key, UploadId, ufh);

}

}

if (Object.keys(ufh.uploadPartResults).length < partNumber) {

const msg = `${Object.keys(ufh.uploadPartResults).length} < ${partNumber} : less result than part number, maybe some part upload has failed`;

throw new Error(msg);

} else {

// Calculate the whole file ETag checksum (MD5 hash of chunk MD5 hashes)

const MD5 = algo.MD5.create();

for (let i = 1; i <= partNumber; i++) {

MD5.update(ufh.uploadPartChecksum[i]);

}

const checksumTag = '"' + MD5.finalize().toString(enc.Hex) + '-' + partNumber + '"';

const MultipartUpload: CompletedMultipartUpload = { Parts: [] };

MultipartUpload.Parts = Object.keys(ufh.uploadPartResults).map((k) => {

const partNumber: number = +k;

return { ETag: ufh.uploadPartResults[partNumber].ETag, PartNumber: partNumber };

});

const command = new CompleteMultipartUploadCommand({

Bucket,

Key,

UploadId,

MultipartUpload,

});

const result = await client.send(command);

if (result.ETag !== checksumTag) {

throw new Error('ETag differs from the expected value: possible corruption or man-in-the-middle attack');

} else {

return await putObjectTagging(client, importId, Bucket, destBucketName, Key);

}

}

};

// Upload a chunk

const uploadChunk = async (

Bucket: string,

Body: Uint8Array,

PartNumber: number,

client: S3Client,

Key: string,

UploadId: string,

ufh: MultipartUploadFileHandler,

) => {

try {

// Calculate SHA256 checksum for chunk

const wordBuffer = lib.WordArray.create(Body);

const SHA256 = algo.SHA256.create();

const checksumBytes = SHA256.update(wordBuffer).finalize();

const ChecksumSHA256 = checksumBytes.toString(enc.Base64);

const MD5 = algo.MD5.create();

const md5checksumBytes = MD5.update(wordBuffer).finalize();

console.log(`Sending chunk ${PartNumber} of size ${Body.byteLength} bytes`);

const uploadParam: UploadPartCommandInput = {

Bucket,

UploadId,

PartNumber,

Key,

Body,

ChecksumSHA256,

};

const command = new UploadPartCommand(uploadParam);

const uploadPartOutput = await client.send(command);

ufh.uploadPartResults[PartNumber] = uploadPartOutput;

ufh.uploadPartChecksum[PartNumber] = md5checksumBytes;

ufh.bytesSent += Body.byteLength;

} catch (error) {

// An error occurred while uploading part

console.log(`an error occurred while uploading part ${PartNumber}`, error);

// We don't want to throw an error (we should manage retry or abort)

}

};
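Two steps of the multipart upload in the script above are easy to get wrong: regrouping stream pieces into fixed-size parts without losing bytes, and computing the ETag expected for the whole object. The Python sketch below illustrates both, independently of any SDK. The function names are hypothetical, and the ETag rule (MD5 of the per-part MD5 digests, followed by "-" and the part count) is the convention the script itself relies on; it may not hold for every server configuration.

```python
import hashlib
from typing import Iterable, Iterator


def rechunk(stream: Iterable[bytes], part_size: int) -> Iterator[bytes]:
    """Regroup arbitrarily sized pieces into parts of exactly part_size bytes
    (only the last part may be shorter)."""
    if part_size <= 0:
        raise ValueError("part_size must be positive")
    buffer = bytearray()
    for piece in stream:
        buffer.extend(piece)
        # Emit every full part as soon as it is available, so no byte is dropped.
        while len(buffer) >= part_size:
            yield bytes(buffer[:part_size])
            del buffer[:part_size]
    if buffer:
        yield bytes(buffer)


def multipart_etag(parts: Iterable[bytes]) -> str:
    """MD5 of the concatenated per-part MD5 digests, suffixed with the part count."""
    digests = [hashlib.md5(part).digest() for part in parts]
    combined = hashlib.md5(b"".join(digests)).hexdigest()
    return f'"{combined}-{len(digests)}"'


pieces = [b"abc", b"defgh", b"i"]
parts = list(rechunk(pieces, part_size=4))
print(parts)  # [b'abcd', b'efgh', b'i']
print(multipart_etag(parts))
```

Comparing the computed value with the ETag returned when the upload is completed gives a cheap end-to-end integrity check.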

React-JS - Example

The following React component displays a page for uploading a file:

- two authentication modes are available: either using the minio credentials directly, or going through STS with a JWT token

- two upload modes are available: either PutObject for small files, or multipart for larger files.

It uses the s3UploadDemoFn.ts script above.

It was tested with the following dependencies:

{

"dependencies": {

"@aws-sdk/client-s3": "^3.635.0",

"@aws-sdk/types": "^3.609.0",

"@types/crypto-js": "^4.2.2",

"@xstate/react": "^5.0.0",

"crypto-js": "^4.2.0",

"fast-xml-parser": "^4.5.0",

"nanoid": "^5.0.8",

"pretty-bytes": "^6.1.1",

"primeflex": "^3.3.1",

"primeicons": "^6.0.1",

"primereact": "10.2.1",

"react": "^18.2.0",

"react-dom": "^18.2.0",

"react-hook-form": "^7.51.0",

"react-i18next": "^14.0.1",

"react-router-dom": "^6.20.1"

}

}

S3UploadDemo.tsx :

import { nanoid } from 'nanoid';

import { FileUpload, FileUploadHandlerEvent, ItemTemplateOptions } from 'primereact/fileupload';

import { InputText } from 'primereact/inputtext';

import { useEffect, useState } from 'react';

import { PutObjectAndTag, AuthSts, MultipartUploadAndTag } from './s3UploadDemoFn';

import prettyBytes from 'pretty-bytes';

import { InputTextarea } from 'primereact/inputtextarea';

import { InputSwitch } from 'primereact/inputswitch';

import { Slider } from 'primereact/slider';

import { classNames } from 'primereact/utils';

export const S3UploadDemo = () => {

const [files, setFiles] = useState<File[]>();

// Form states

const [sasBucketName, setSasBucketName] = useState<string>('sas');

const [destBucketName, setDestBucketName] = useState<string>('test');

const [s3Endpoint, setS3Endpoint] = useState<string>('http://localhost:9000');

const [importId, setImportId] = useState<string>(nanoid(8));

const [destObjectKey, setDestObjectKey] = useState<string>();

const [metadata, setMetadata] = useState<string>('{"meta": "data"}');

const [s3accesskeyid, setS3accesskeyid] = useState<string>('minioadmin');

const [s3secretaccesskey, setS3secretaccesskey] = useState<string>('minioadmin');

const [jwt, setJwt] = useState<string>('');

const [useSts, setUseSts] = useState<boolean>(false);

const [useMultipart, setUseMultipart] = useState<boolean>(false);

const [chunkSize, setChunkSize] = useState(100000000);

// Feedback states

const [fileStatus, setFileStatus] = useState<'pending' | 'uploading' | 'success' | 'error'>();

const [message, setMessage] = useState<string>();

useEffect(() => {

if (files && files.length > 0) {

const file = files[0];

setDestObjectKey(importId + '/' + file.name);

}

}, [files, importId]);

const customUploader = async (event: FileUploadHandlerEvent) => {

const file = event.files[0];

setFileStatus('uploading');

setMessage(undefined);

let s3credentials = {

accessKeyId: s3accesskeyid,

secretAccessKey: s3secretaccesskey,

};

if (useSts) {

try {

const stsCreds = await AuthSts(s3Endpoint, jwt);

if (!stsCreds) {

setFileStatus('error');

setMessage('Not able to authenticate');

return;

} else {

s3credentials = stsCreds;

}

} catch (error) {

setFileStatus('error');

if (error instanceof Error) {

setMessage(error.message);

} else {

setMessage(String(error));

}

return;

}

}

let metadataRecord = undefined;

try {

const parsedMetadata = JSON.parse(metadata);

metadataRecord = parsedMetadata as Record<string, string>;

} catch (error) {

console.log('non parsable metadata');

}

if (useMultipart) {

MultipartUploadAndTag(

s3Endpoint,

importId,

file,

s3credentials,

sasBucketName,

destBucketName,

destObjectKey || file.name,

metadataRecord,

chunkSize,

)

.then((result) => {

setFileStatus('success');

setMessage(JSON.stringify(result));

})

.catch((error) => {

setFileStatus('error');

if (error instanceof Error) {

setMessage(error.message);

} else {

setMessage(String(error));

}

});

} else {

PutObjectAndTag(

s3Endpoint,

importId,

file,

s3credentials,

sasBucketName,

destBucketName,

destObjectKey || file.name,

metadataRecord,

)

.then((result) => {

setFileStatus('success');

setMessage(JSON.stringify(result));

})

.catch((error) => {

setFileStatus('error');

if (error instanceof Error) {

setMessage(error.message);

} else {

setMessage(String(error));

}

});

}

};

const itemTemplate = (file: any, options: ItemTemplateOptions) => {

const getBgFromStatus = () => {

switch (fileStatus) {

case 'pending':

return 'yellow';

case 'success':

return 'green';

case 'uploading':

return 'blue';

case 'error':

return 'red';

default:

return 'gray';

}

};

const getIconFromStatus = () => {

switch (fileStatus) {

case 'pending':

return 'pi pi-file';

case 'success':

return 'pi pi-thumbs-up-fill';

case 'uploading':

return 'pi pi-spin pi-spinner';

case 'error':

return 'pi pi-exclamation-triangle';

default:

return 'pi pi-file';

}

};

return (

<div className={classNames('bg-' + getBgFromStatus() + '-100', 'border-round-md')}>

<div className="flex justify-content-between">

<span>

{file.name} ({prettyBytes(file.size)})

</span>

<span>{fileStatus}</span>

<i className={getIconFromStatus()}></i>

</div>

{message && (

<div className="mt-4">

<InputTextarea className="w-full" value={message} readOnly={true} rows={15} />

</div>

)}

</div>

);

};

return (

<div className="flex flex-column w-full m-4 align-items-center">

<h1>S3 file upload demo</h1>

<div className="card w-7">

<div className="flex flex-column gap-1 m-4">

<div className="flex justify-content-between flex-wrap">

<div className="">S3 Endpoint</div>

<div className="text-color-secondary font-italic">

URL of the minio endpoint (absolute, or relative if reverse proxied)

</div>

</div>

<InputText

className="w-full"

id="s3endpoint"

value={s3Endpoint}

onChange={(e) => setS3Endpoint(e.target.value)}

/>

</div>

<div className="flex flex-column gap-1 m-4">

<div className="flex flex-row justify-content-between align-content-center flex-wrap">

<div>Use STS auth</div>

<InputSwitch checked={useSts} onChange={(e) => setUseSts(e.value)} />

<div className="text-color-secondary font-italic">

Authenticate using STS sending your JWT token to minio

</div>

</div>

</div>

{!useSts && (

<>

<div className="flex flex-column gap-1 m-4">

<div className="flex justify-content-between flex-wrap">

<div>S3 Access Key ID</div>

<div className="text-color-secondary font-italic">The minio username (if not using STS)</div>

</div>

<InputText

className="w-full"

id="s3accesskeyid"

value={s3accesskeyid}

onChange={(e) => setS3accesskeyid(e.target.value)}

disabled={useSts}

/>

</div>

<div className="flex flex-column gap-1 m-4">

<div className="flex justify-content-between flex-wrap">

<div>S3 Access Key Secret</div>

<div className="text-color-secondary font-italic">The minio password (if not using STS)</div>

</div>

<InputText

className="w-full"

id="s3secretaccesskey"

value={s3secretaccesskey}

onChange={(e) => setS3secretaccesskey(e.target.value)}

disabled={useSts}

/>

</div>

</>

)}

{useSts && (

<div className="flex flex-column gap-1 m-4">

<div className="flex justify-content-between flex-wrap">

<div>JWT Token</div>

<div className="text-color-secondary font-italic">The JWT token for STS authentication</div>

</div>

<InputTextarea className="w-full" id="jwt" value={jwt} onChange={(e) => setJwt(e.target.value)} />

</div>

)}

<div className="flex flex-column gap-1 m-4">

<div className="flex flex-row justify-content-between flex-wrap align-content-center">

<div>Use Multipart Upload</div>

<InputSwitch checked={useMultipart} onChange={(e) => setUseMultipart(e.value)} />

<div className="text-color-secondary font-italic">

For files bigger than 5 MB, it's recommended to use multipart upload

</div>

</div>

</div>

{useMultipart && (

<div className="flex flex-column gap-1 m-4">

<div className="flex justify-content-between flex-wrap">

<div>Multipart chunk size: {prettyBytes(chunkSize)}</div>

<div className="text-color-secondary font-italic">

The maximum size of the parts to be uploaded when using multipart upload

</div>

</div>

<Slider

value={chunkSize}

onChange={(e) => setChunkSize(Array.isArray(e.value) ? e.value[0] : e.value)}

step={1000000}

min={6000000}

max={500000000}

/>

</div>

)}

<div className="flex flex-column gap-1 m-4">

<div className="flex justify-content-between flex-wrap">

<div>sas bucket name</div>

<div className="text-color-secondary font-italic">The name of the bucket that is the antivirus gateway</div>

</div>

<InputText

className="w-full"

id="sasBucketName"

value={sasBucketName}

onChange={(e) => setSasBucketName(e.target.value)}

/>

</div>

<div className="flex flex-column gap-1 m-4">

<div className="flex justify-content-between flex-wrap">

<div>Destination bucket name</div>

<div className="text-color-secondary font-italic">

Where to put the object after it has been analysed by antivirus

</div>

</div>

<InputText

className="w-full"

id="destBucketName"

value={destBucketName}

onChange={(e) => setDestBucketName(e.target.value)}

/>

</div>

<div className="flex flex-column gap-1 m-4">

<div className="flex justify-content-between flex-wrap">

<div>Import Id</div>

<div className="text-color-secondary font-italic">An ID for the import (unique or not)</div>

</div>

<InputText className="w-full" id="importId" value={importId} onChange={(e) => setImportId(e.target.value)} />

</div>

<div className="flex flex-column gap-1 m-4">

<div className="flex justify-content-between flex-wrap">

<div>Metadata</div>

<div className="text-color-secondary font-italic">

A JSON representation of a <span>{'Record<string, string>'}</span> for metadata

</div>

</div>

<InputTextarea

className="w-full"

id="metadata"

value={metadata}

onChange={(e) => setMetadata(e.target.value)}

/>

</div>

<div className="flex flex-column gap-1 m-4">

<div className="flex justify-content-between flex-wrap">

<div>Destination object key</div>

<div className="text-color-secondary font-italic">

The path of the destination object, including path and object name, e.g. /path/to/the/object

</div>

</div>

<InputText

className="w-full"

id="destObjectKey"

value={destObjectKey}

onChange={(e) => setDestObjectKey(e.target.value)}

placeholder="Key of the dest object (including path), will use file name if empty"

/>

</div>

<div className="m-4">

<FileUpload

onSelect={(e) => {

setMessage(undefined);

setFiles(e.files);

if (e.files) {

setFileStatus('pending');

}

}}

mode="advanced"

uploadLabel="Upload"

chooseLabel="Select a file"

customUpload

uploadHandler={customUploader}

itemTemplate={itemTemplate}

emptyTemplate={<p className="m-0">Drag and drop files to here to upload.</p>}

/>

</div>

</div>

</div>

);

};

Python - Example

AWS SDK for Python (boto3)

user: str = "minioadmin"
password: str = "minioadmin"
endpoint: str = "http://host.docker.internal:9000"
bucket: str = "sas"
key: str = "/bin/sh"

if __name__ == "__main__":
    from boto3 import resource
    from time import sleep
    from uuid import uuid4

    c = resource(
        service_name="s3",
        verify=False,
        use_ssl=False,
        endpoint_url=endpoint,
        aws_access_key_id=user,
        aws_secret_access_key=password,
    )

    c.Bucket(name=bucket).upload_file(
        Filename=key,
        Key=key,
        ExtraArgs={
            "Metadata": {
                "attr1": "val1",  # labels
            },
        },
    )

    c.meta.client.put_object_tagging(
        Bucket=bucket,
        Key=key,
        Tagging={
            "TagSet": [
                {
                    "Key": "athea.tech/kosmos/apps/iduv2/status",
                    "Value": "ToScan",
                },
                {
                    "Key": "athea.tech/kosmos/apps/iduv2/importUUID",
                    "Value": str(uuid4()),
                },
            ],
        },
    )

    scanning = True
    while scanning:
        tags = c.meta.client.get_object_tagging(
            Bucket=bucket,
            Key=key,
        )
        tagsets = tags["TagSet"]
        sleep(1)
        for t in tagsets:
            if t["Key"] == "athea.tech/kosmos/apps/iduv2/status":
                match t["Value"]:
                    case "Clean" | "IngestStarted" | "IngestFailed" | "IngestCompleted":
                        print("File is not infected")
                        scanning = False
                        break
                    case "Infected":
                        print("File is infected")
                        scanning = False
                        break
                    case "Failed":
                        print("Failed")
                        scanning = False
                        break
                    case _:
                        print("Scanning...")
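The loop above polls forever if the status never changes. A possible variant, sketched below, adds a timeout and takes the tag lookup as a parameter so that it can be exercised without a server. The names `wait_for_scan` and `status_from_tagset` are hypothetical, and the default timeout and interval are arbitrary values chosen for the illustration.

```python
import time
from typing import Callable, Optional

STATUS_KEY = "athea.tech/kosmos/apps/iduv2/status"
PENDING = {"ToScan", "Scanning"}  # the scan has not produced a result yet


def status_from_tagset(tagset: list) -> Optional[str]:
    """Return the value of the status tag, or None when the tag is absent."""
    for tag in tagset:
        if tag.get("Key") == STATUS_KEY:
            return tag.get("Value")
    return None


def wait_for_scan(
    get_tagset: Callable[[], list],
    timeout: float = 60.0,
    interval: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> str:
    """Poll until the status leaves the pending values, or raise TimeoutError."""
    deadline = time.monotonic() + timeout
    while True:
        status = status_from_tagset(get_tagset())
        if status is not None and status not in PENDING:
            return status
        if time.monotonic() >= deadline:
            raise TimeoutError(f"scan still pending after {timeout} seconds")
        sleep(interval)
```

With the boto3 resource `c` used above, the lookup could be passed as `lambda: c.meta.client.get_object_tagging(Bucket=bucket, Key=key)["TagSet"]`.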

Minio Client (MC) - Example

mc alias set api http://host.docker.internal:9000 minioadmin minioadmin

# copy a file to sas with labels

mc cp --attr 'l1=l1;l2=l2' /bin/sh api/sas/bin/sh

export TOSCAN="athea.tech/kosmos/apps/iduv2/status=ToScan"

export IMPORTUUID="athea.tech/kosmos/apps/iduv2/importUUID=specialuuid"

# scan file

mc tag set api/sas/bin/sh "${TOSCAN}&${IMPORTUUID}"

# see result

watch mc tag list api/sas/bin/sh

Java - Example

AWS SDKV2

package athea.tech;

import java.net.URI;

import java.net.URISyntaxException;

import java.nio.file.Paths;

import java.util.HashMap;

import java.util.List;

import java.util.Map;

import java.util.UUID;

import java.util.concurrent.CompletableFuture;

import java.util.concurrent.ExecutionException;

import software.amazon.awssdk.auth.credentials.AwsBasicCredentials;

import software.amazon.awssdk.auth.credentials.StaticCredentialsProvider;

import software.amazon.awssdk.core.async.AsyncRequestBody;

import software.amazon.awssdk.core.internal.http.loader.DefaultSdkAsyncHttpClientBuilder;

import software.amazon.awssdk.http.SdkHttpConfigurationOption;

import software.amazon.awssdk.regions.Region;

import software.amazon.awssdk.services.s3.S3AsyncClient;

import software.amazon.awssdk.services.s3.model.GetObjectTaggingRequest;

import software.amazon.awssdk.services.s3.model.GetObjectTaggingResponse;

import software.amazon.awssdk.services.s3.model.PutObjectRequest;

import software.amazon.awssdk.services.s3.model.PutObjectResponse;

import software.amazon.awssdk.services.s3.model.PutObjectTaggingRequest;

import software.amazon.awssdk.services.s3.model.PutObjectTaggingResponse;

import software.amazon.awssdk.services.s3.model.Tag;

import software.amazon.awssdk.services.s3.model.Tagging;

import software.amazon.awssdk.utils.AttributeMap;

/**

* DEMO: sas API

*/

public class Main {

public static String ENDPOINT = "http://host.docker.internal:9000";

public static String USER = "minioadmin";

public static String PASSWORD = "minioadmin";

public static String BUCKET = "sas";

public static String KEY = "/bin/sh";

public static void main(String... args) throws URISyntaxException, InterruptedException, ExecutionException {

final AttributeMap attributeMap = AttributeMap.builder()

.put(SdkHttpConfigurationOption.TRUST_ALL_CERTIFICATES, true)

.build();

AwsBasicCredentials creds = AwsBasicCredentials.create(USER, PASSWORD);

S3AsyncClient s3 = S3AsyncClient.builder()

.endpointOverride(new URI(ENDPOINT))

.credentialsProvider(StaticCredentialsProvider.create(creds))

.forcePathStyle(true)

.region(Region.US_EAST_1)

.httpClient(new DefaultSdkAsyncHttpClientBuilder()

.buildWithDefaults(attributeMap)

)

.build();

scan(s3);

}

public static void scan(S3AsyncClient s3) throws InterruptedException, ExecutionException {

Map<String, String> labels = new HashMap<>();

labels.put("l1", "v1");

PutObjectRequest objectRequest = PutObjectRequest.builder()

.bucket(BUCKET)

.key(KEY)

.metadata(labels)

.build();

CompletableFuture<PutObjectResponse> future = s3.putObject(objectRequest,

AsyncRequestBody.fromFile(Paths.get(KEY)));

future.whenComplete((resp, err) -> {

if (resp != null) {

System.out.println("Object uploaded. Details: " + resp);

} else {

err.printStackTrace();

}

});

future.join();

Tagging tags = Tagging.builder()

.tagSet(

Tag.builder()

.key("athea.tech/kosmos/apps/iduv2/status")

.value("ToScan").build(),

Tag.builder()

.key("athea.tech/kosmos/apps/iduv2/importUUID")

.value(UUID.randomUUID().toString()).build())

.build();

PutObjectTaggingRequest objectTaggingRequest = PutObjectTaggingRequest.builder()

.bucket(BUCKET)

.key(KEY)

.tagging(tags)

.build();

CompletableFuture<PutObjectTaggingResponse> futureScan = s3.putObjectTagging(objectTaggingRequest);

futureScan.whenComplete((resp, err) -> {

if (resp != null) {

System.out.println("Scan started...");

} else {

err.printStackTrace();

}

});

futureScan.join();

GetObjectTaggingRequest gObjectTaggingRequest = GetObjectTaggingRequest.builder()

.bucket(BUCKET)

.key(KEY)

.build();

Boolean isScanning = Boolean.TRUE;

while (isScanning) {

Thread.sleep(1000);

CompletableFuture<GetObjectTaggingResponse> res = s3.getObjectTagging(gObjectTaggingRequest);

List<Tag> tagset = res.join().tagSet();

for (Tag t : tagset) {

if (t.key().equals("athea.tech/kosmos/apps/iduv2/status")) {

switch (t.value()) {

case "Clean":

case "IngestStarted":

case "IngestCompleted":

case "IngestFailed":

System.out.println("Clean");

isScanning = Boolean.FALSE;

break;

case "Infected":

System.out.println("Infected");

isScanning = Boolean.FALSE;

break;

case "Failed":

System.out.println("Failed");

isScanning = Boolean.FALSE;

break;

default:

System.out.println("Scanning...");

break;

}

}

}

}

}

}